How to evaluate whether your scenarios are practicing the right cognitive skill.

You’ve just finished building a scenario. The character feels real, and the situation and options are plausible. You’re satisfied with it. But are you also crystal-clear about what you actually asked people to do? Before asking whether a scenario is well-crafted, it helps to ask whether it’s practicing the right thing at all. Are you prompting recognition or judgment?

The gap between recognition and judgment

Transfer-appropriate processing (Morris, Bransford & Franks, 1977) gives us a useful lens: learning transfers when the cognitive operations practiced during training match those required during performance. If what you practice is fundamentally different from what the job requires, transfer is less likely.

Recognition tasks have a correct answer identifiable by matching an option to a known principle. They’re a valid learning format when you’re aiming for retrieval practice. Judgment tasks require what Paris, Lipson and Wixson (1983) called conditional knowledge: knowing when and how to act, not just what the right action looks like in the abstract. You can’t develop conditional knowledge without practicing the judgment itself.

A scenario can look like judgment practice while asking for nothing more than recognition. The difference lives in the cognitive demand of the decision point, not in how realistic the situation feels.

It’s also worth separating two things that are easy to conflate. Whether a decision point requires recognition or judgment is a function of how the options are written. Whether the consequence is instructional or intrinsic is a feedback design choice. These are independent variables: you can have a recognition-level decision with fully intrinsic consequences, or a genuine judgment task followed by a narrator verdict. Both dimensions matter, but they can fail independently.

What this looks like in practice

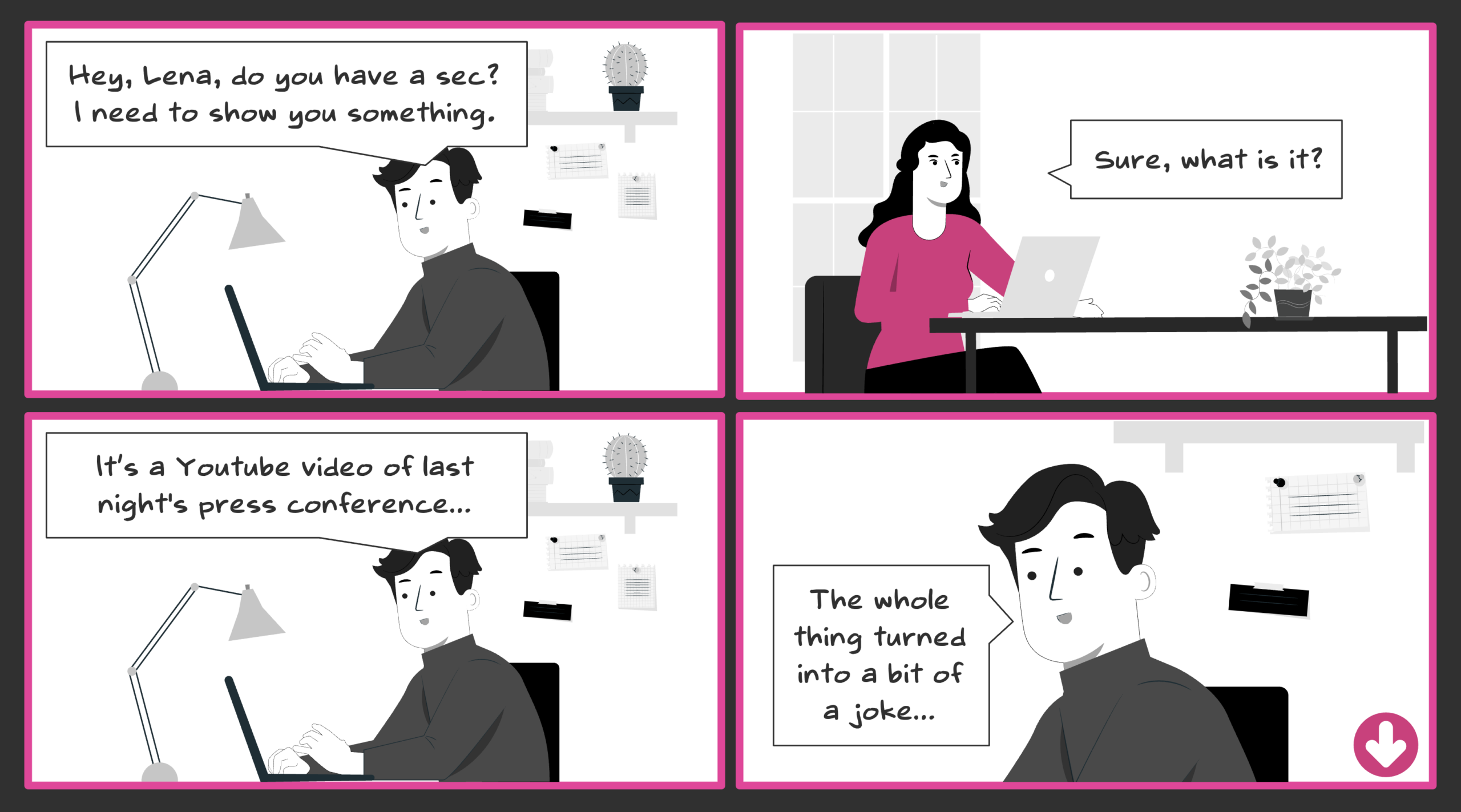

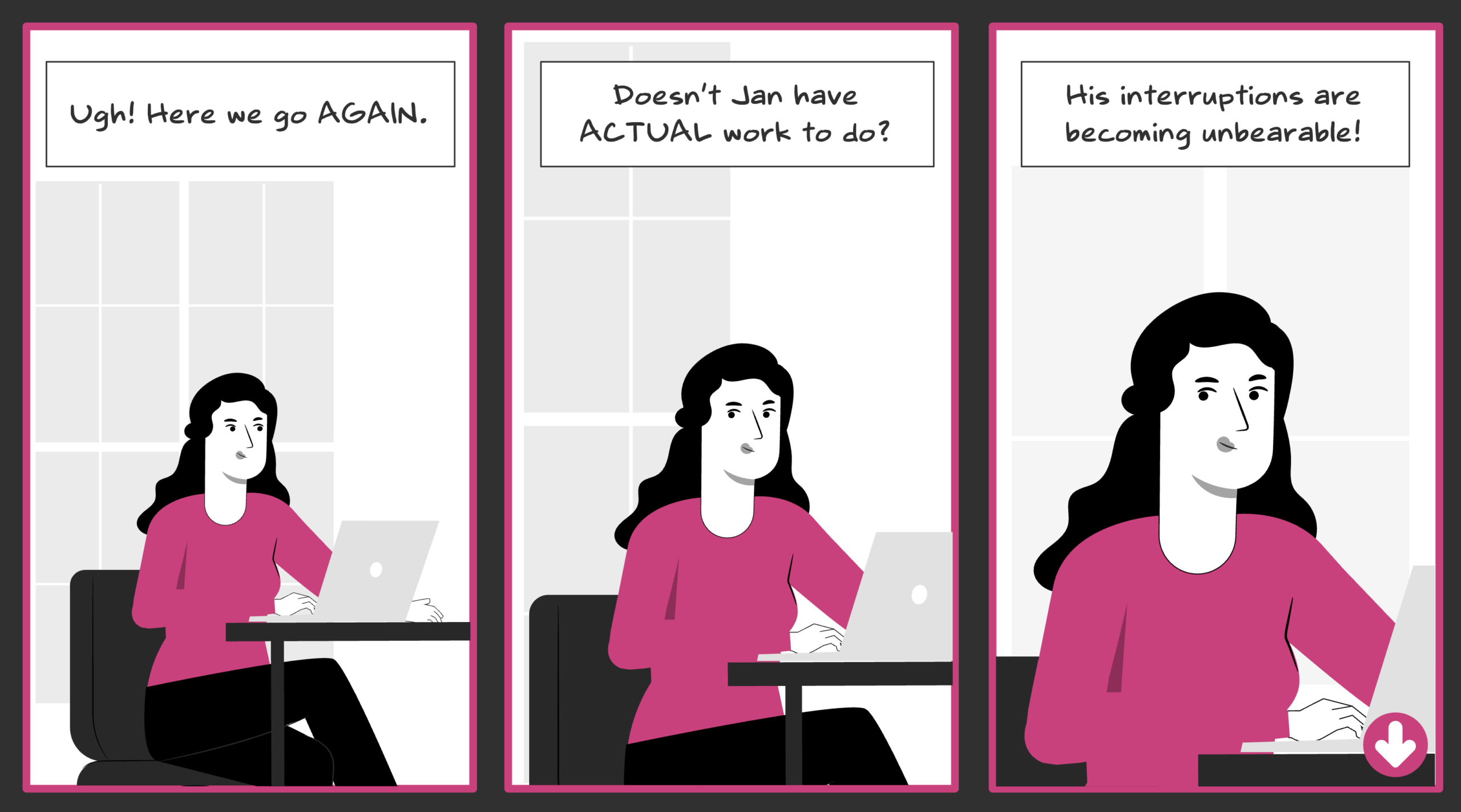

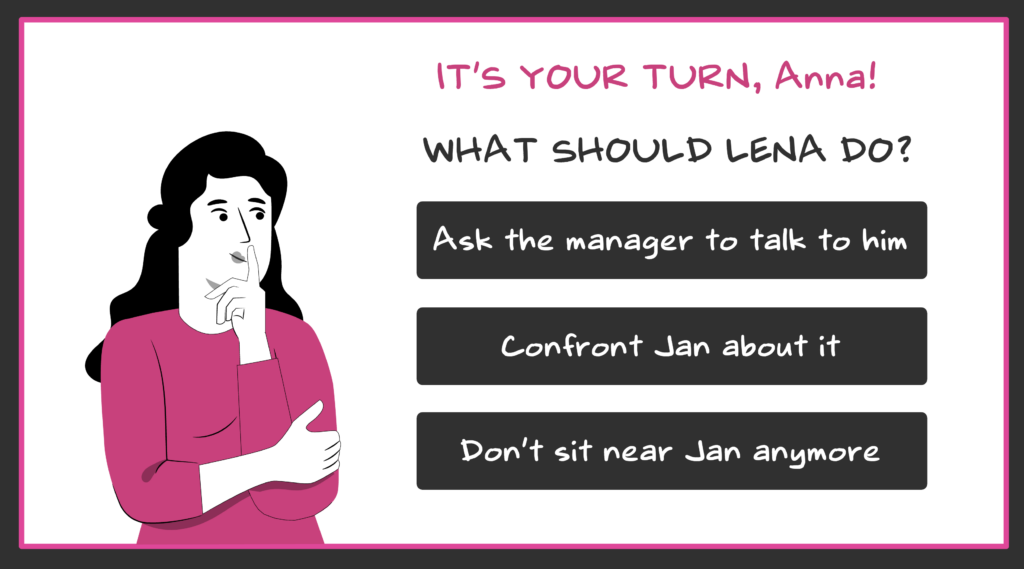

To make this concrete, I’m going to look at two decision points from one of my own interactive stories. Speak or Sink is an interactive story about assertive communication at work, designed for young women navigating workplace dynamics and social conditioning. The main character is Lena, a young graduate. In Scene 1, her colleague Jan keeps interrupting her work.

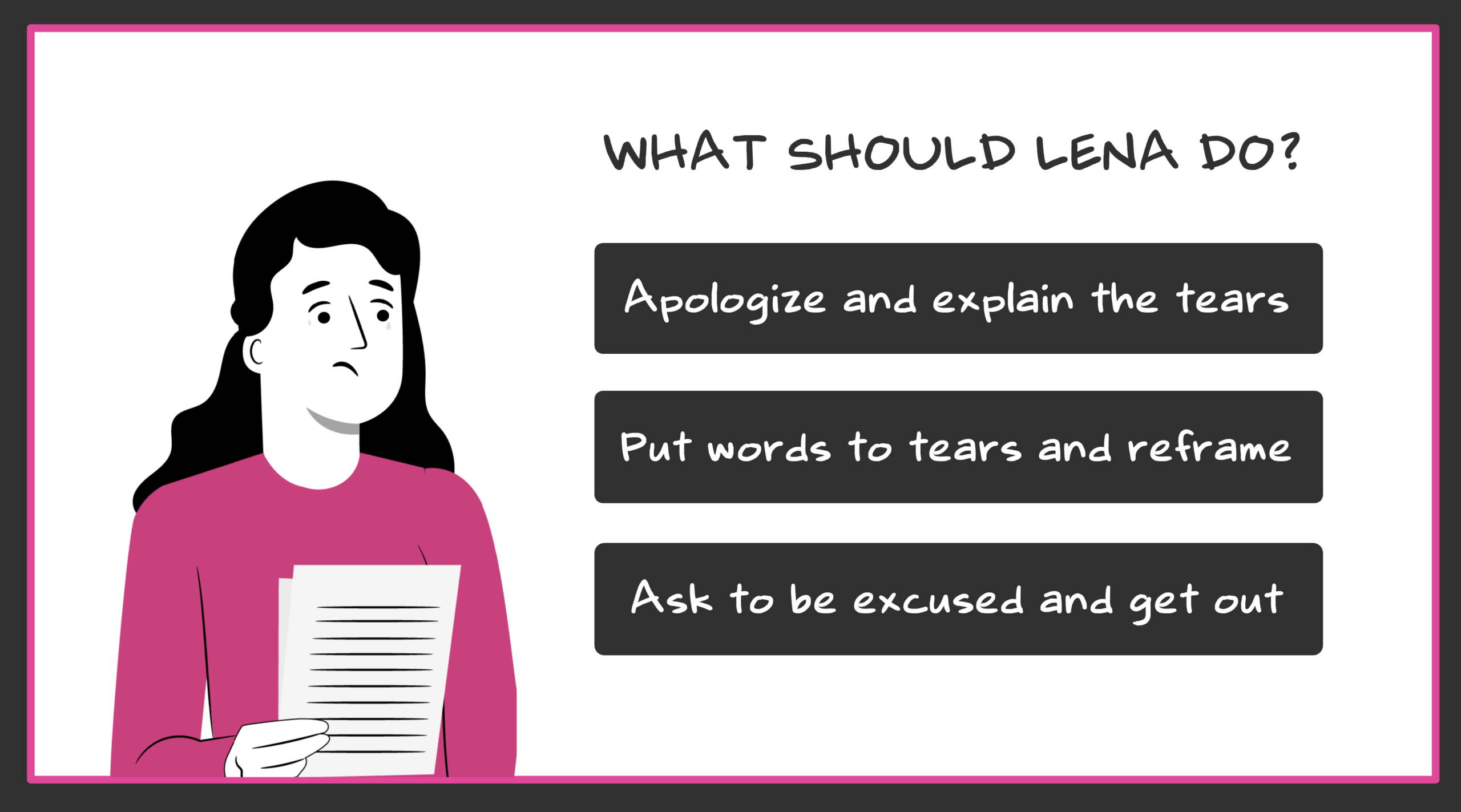

A recognition version of this situation would announce the correct answer by name: “assertive communication” is the topic of the story. The wrong answers are identifiable by type without knowing anything else about the relationship or the workplace. And the feedback would say something like: “Incorrect. In this situation you should address the issue directly,” replacing a consequence with instruction.

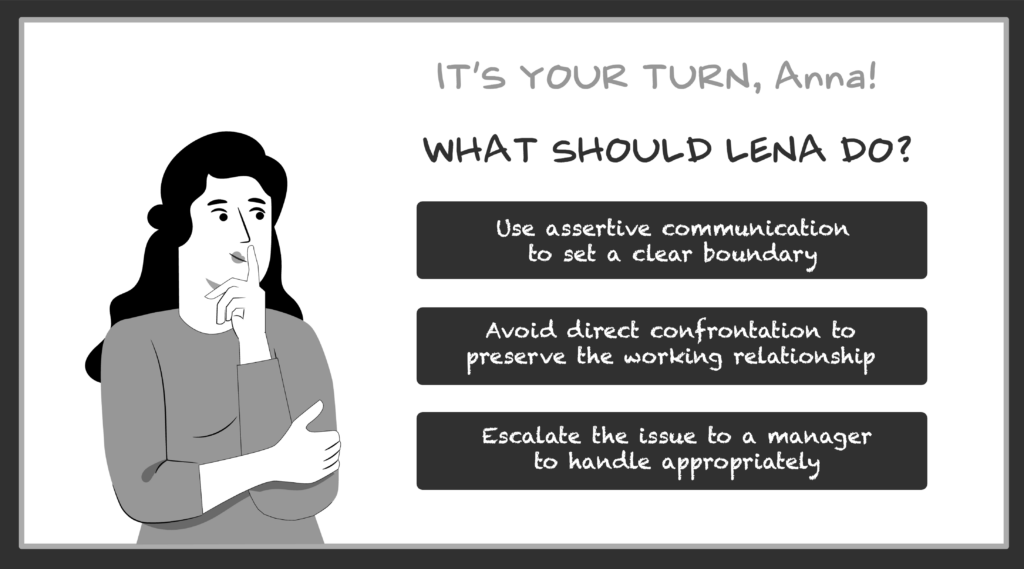

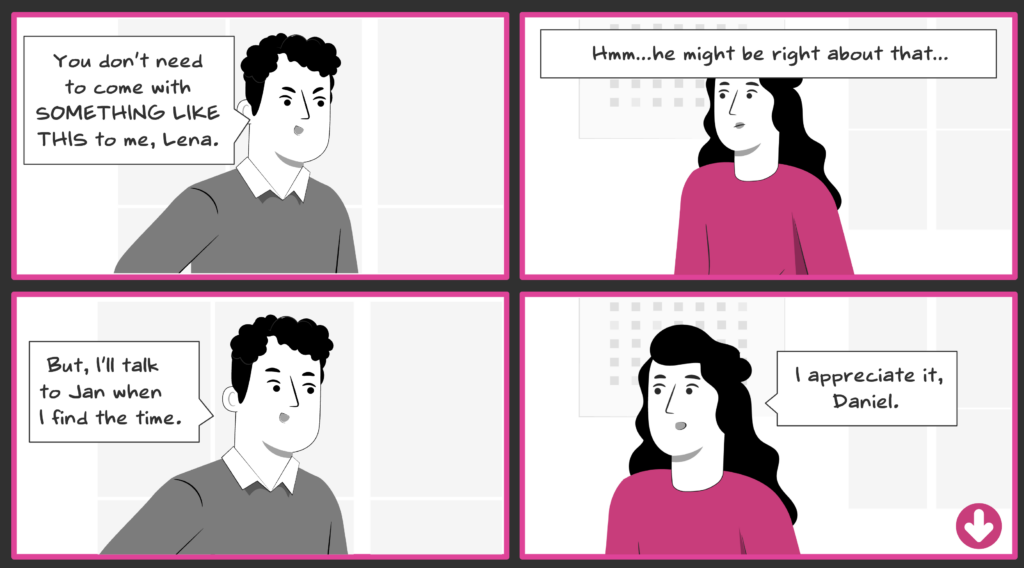

In the original decision point, none of the three options announces itself as obviously wrong. A user can form a general preference, but can’t confidently rank them without reading the specific situation. When the user chooses “Ask the manager to talk to him,” Daniel responds: “You don’t need to come with something like this to me, Lena.” The consequence is specific and socially real, with no principle explained and no narrator verdict. The situation is doing the work.

Christy Tucker draws a related distinction between categorisation questions and action questions. A categorisation question asks people to identify or classify; it’s closer to the Level 1 example above. An action question asks them to decide what to do next within the specific situation. Her point is that options need to stay tied to the concrete characters and details, not abstracted to the general process.

The Scene 1 option “Confront Jan about it” is still on the abstract side of that line: the user doesn’t know what confronting Jan looks like until the consequence reveals Lena’s actual words. Here the abstraction is carried by strong consequence design: the situation delivers what the option withholds. Moving that specificity into the option itself would push the decision even further toward genuine conditional knowledge.

Christy also notes that categorisation questions have a legitimate place as practice or assessment questions. So it’s okay to use abstract options. The problem is mistaking recognition for judgment practice, or making recognition the only cognitive work in a course that claims to build a behavioural skill.

Three dimensions for evaluating decision points

- Decision demand is whether the option text carries the answer or whether the user has to read the situation to decide. This is the first thing to check, because if the options are self-announcing, nothing else matters.

- Situational fidelity is whether the user needs to read the specific dynamics of this situation, or just the general type. A scenario can feel realistic without actually requiring situational judgment.

- Consequence authenticity is whether the verdict arrives from the situation or from instruction. This dimension is independent from the previous two: a well-designed decision point can still be undermined by a narrator explaining what went right or wrong, and a recognition-level decision can be followed by fully intrinsic consequences.

I combined these three dimensions into five levels of decision design, ranging from knowledge checks dressed as scenarios at Level 1 to full conditional knowledge practice at Level 5. It’s a practitioner construct, not an empirically validated framework.

Applying the dimensions to a real example

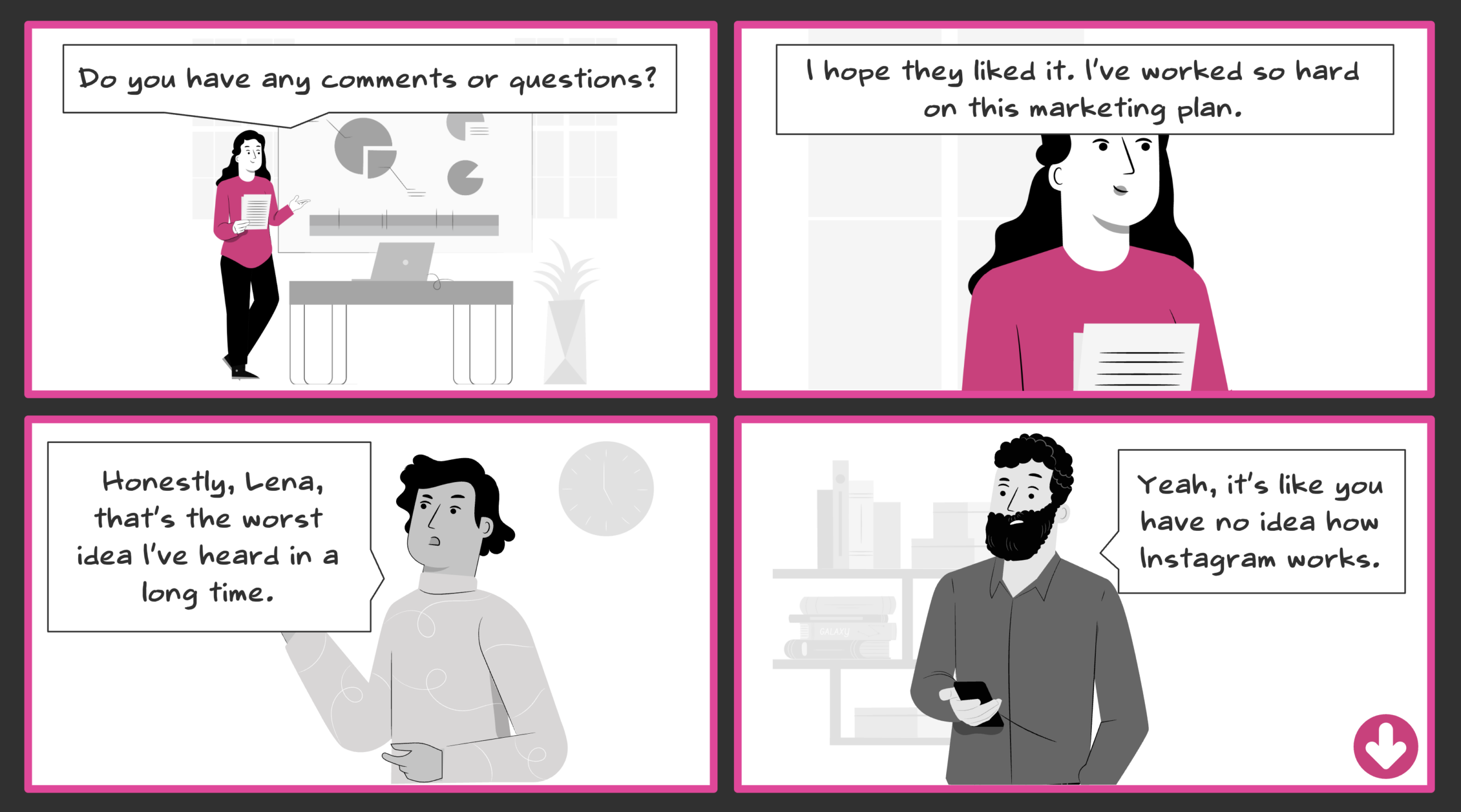

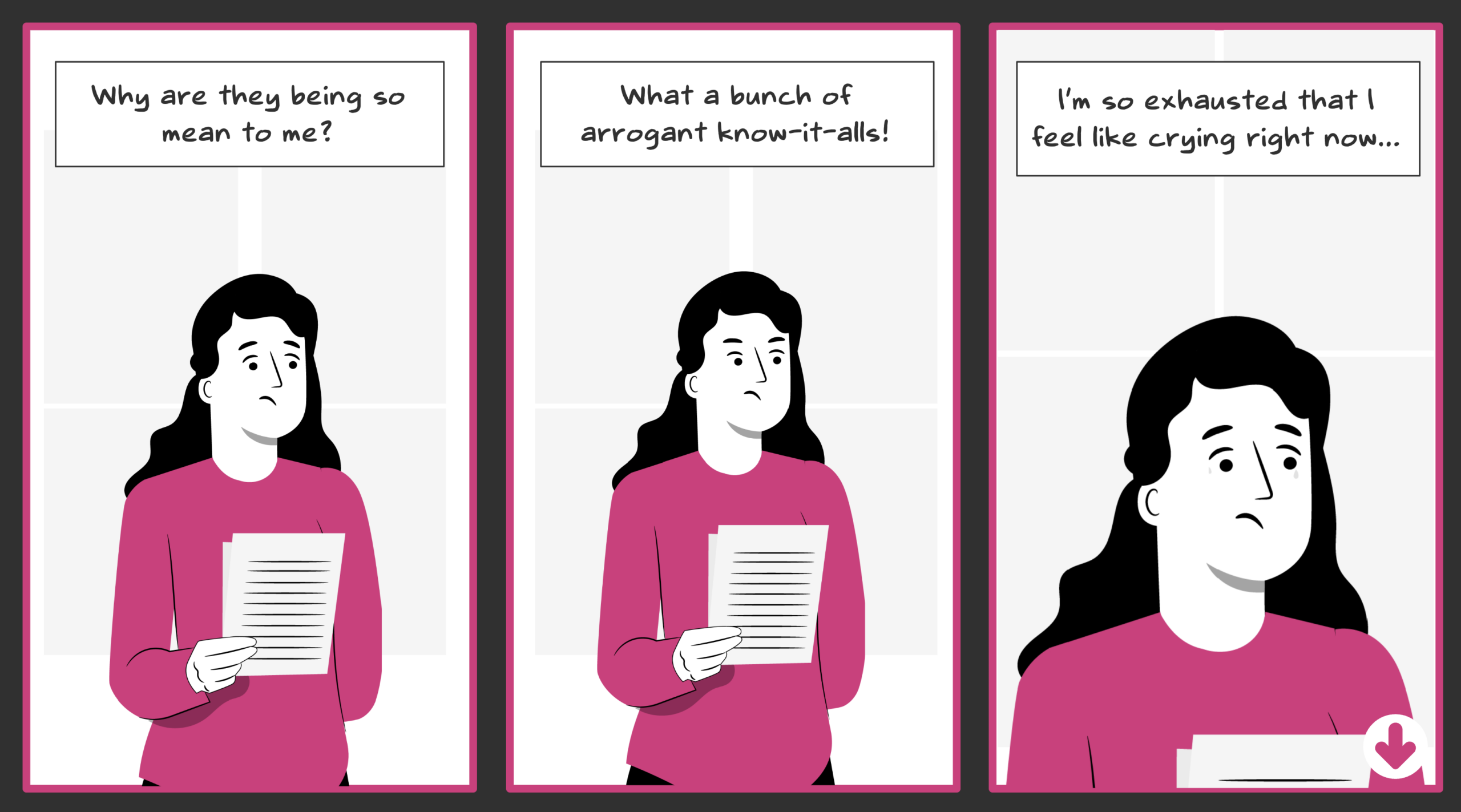

Decision demand is close to Level 4. The options signal a specific type of move but stay somewhat abstracted: the user chooses a strategy, not a situated action. The exact words appear only in the consequence screen. Abstracted options are a particular problem when they don’t lead anywhere consequential.

Situational fidelity is between Level 3 and Level 4. The setup is specific and emotionally grounded, but Daniel’s role isn’t restated at the decision point. A user who has lost track of the character relationships is missing information that would sharpen the judgment. One line before the decision would close that gap.

Consequence authenticity is at Level 4 to 5. The situation delivers the verdict completely: Steve’s comment, Daniel’s break, Tom’s interruption. No narrator explains the principle. The user reads the outcome the way they would read a real room.

The swap test

The post about character-driven learning experiences introduced a swap test: could you replace the character or situation without changing what people need to learn? If yes, it’s probably decoration. A version of that test applies here.

Could you replace the decision point with a question about the underlying principle and lose nothing essential? If yes, the scenario is doing a knowledge check, not judgment practice. The same logic applies to options: could you swap the characters and setting without rewriting the choices? If the options would work in a different scenario on the same topic, with different people and a different context, they’re probably not doing situational work. Effective scenario design not only looks like real life, it also requires what real life often requires: judgment.

Key Research: Scenario-based e-Learning (Clark, 2012); Transfer-appropriate processing (Morris, Bransford & Franks, 1977); Conditional knowledge (Paris, Lipson & Wixson, 1983); Categorisation and action questions (Tucker, 2024, online).

Developed in dialogue with Claude Sonnet 4.6 (Anthropic). All original concepts and final conclusions are my own.